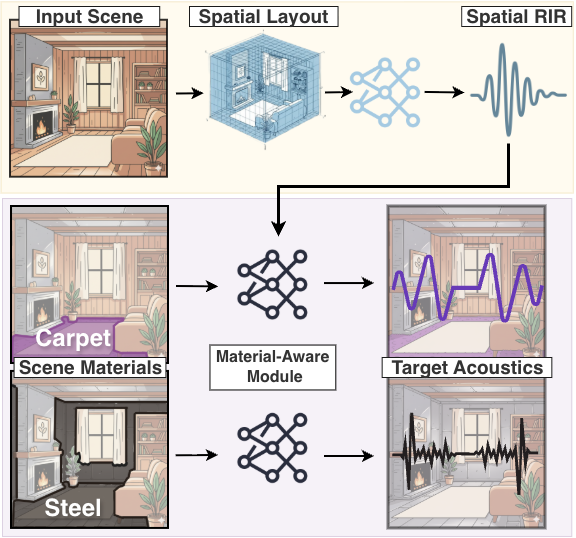

Rings like gold, thuds like wood! The sound we hear in a scene is shaped not only by the spatial layout of the environment but also by the materials of the objects and surfaces within it. For instance, a room with wooden walls will produce a different acoustic experience from a room with the same spatial layout but concrete walls. Accurately modeling these effects is essential for applications such as virtual reality, robotics, architectural design, and audio engineering. Yet, existing methods for acoustic modeling often entangle spatial and material influences in correlated representations, which limits user control and reduces the realism of the generated acoustics. In this work, we present a novel approach for material-controlled Room Impulse Response (RIR) generation that explicitly disentangles the effects of spatial and material cues in a scene. Our approach models the RIR using two modules: a spatial module that captures the influence of the vision-inferred spatial layout of the scene, and a material module that modulates this spatial RIR according to a user-specified material configuration. This explicitly disentangled design allows users to easily modify the material configuration of a scene and observe its impact on acoustics without altering the spatial structure or scene content. Our model provides significant improvements over prior approaches on both acoustic-based metrics (up to +16\% on RTE) and material-based metrics (up to +70\%). Furthermore, through a human perceptual study, we demonstrate the improved realism and material sensitivity of our model compared to the strongest baselines.

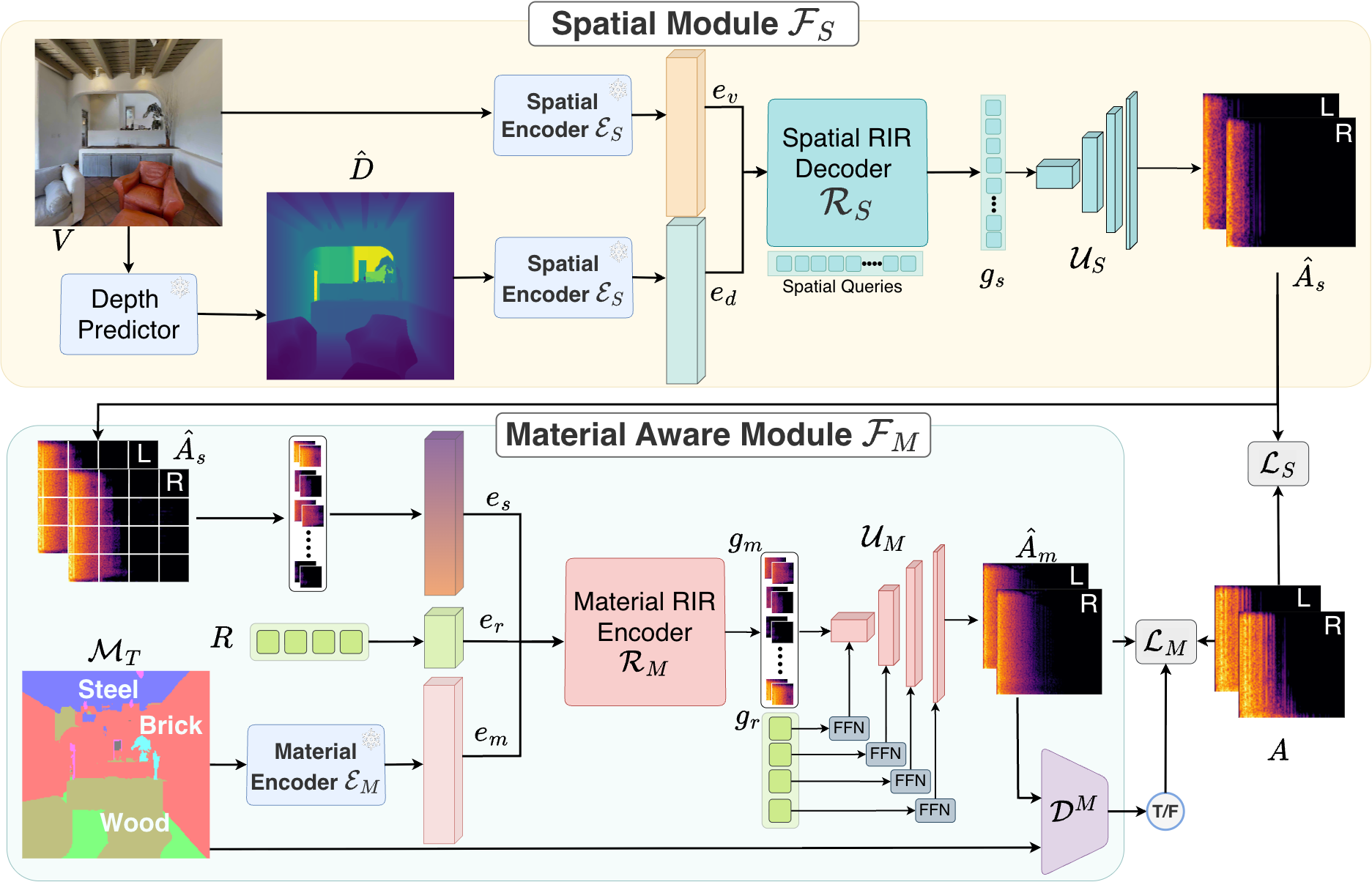

Our MatRIR model F for material-conditioned RIR prediction. Given an RGB image V of a 3D scene, MatRIR uses the Spatial Module FS to extract geometric cues from $V$ and predict a spatially accurate initial estimate of the target RIR, ÂS. Then, for a material segmentation mask M, which specifies a custom object material configuration for V, the Material-Aware Module FM modulates ÂS to produce the final RIR estimate, ÂM. We train the model with a combination of losses that enforces acoustic consistency between ground-truth RIR A and ÂM and promotes cross-modal correspondence between ÂM and M, which substantially improves performance.